A customer calls your support line. They say: “I understand, thank you for explaining.” The words are polite. Cooperative, even. But the pace of their speech has slowed. Their tone is flat. They have not interrupted the agent once in twelve minutes, which is unusual for someone who opened the call angry.

Are they satisfied? Still frustrated but giving up? Resigned? All three are possible, and they lead to very different actions on your end.

This is the problem that voice sentiment analysis is trying to solve. And it is a genuinely hard problem, which is why understanding what it can and cannot do matters more than most vendor descriptions suggest.

What Sentiment Analysis in Voice Actually Measures

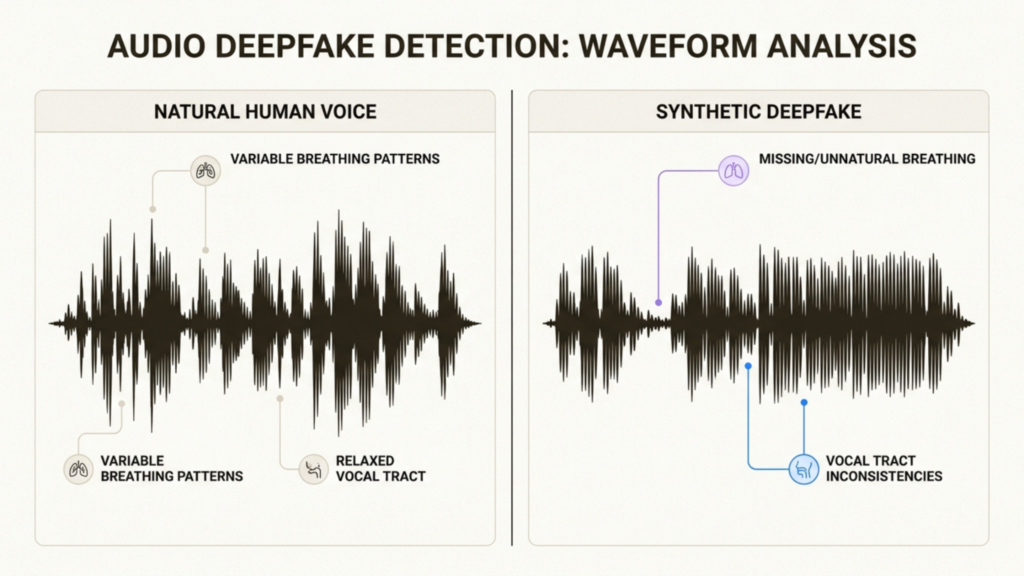

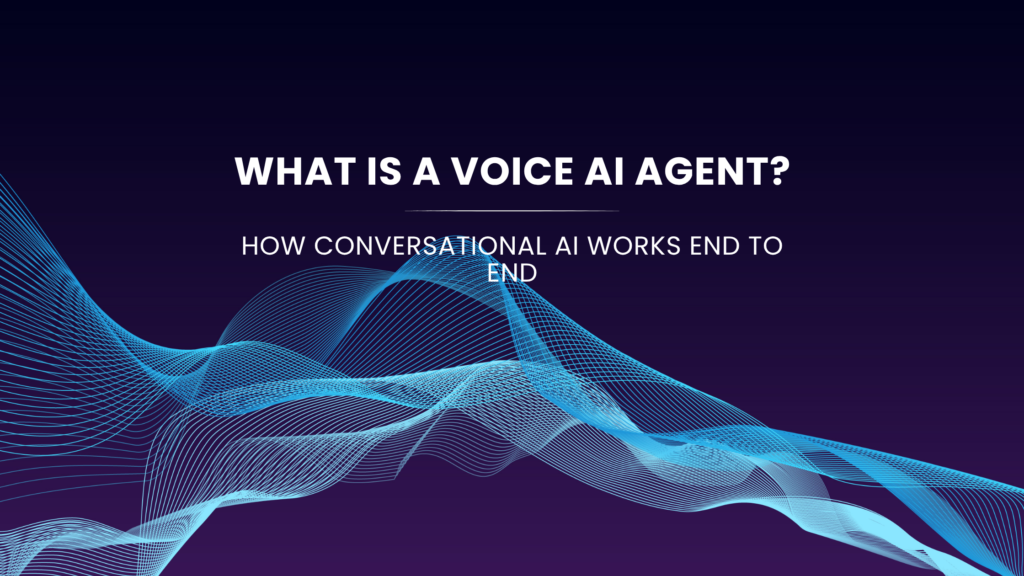

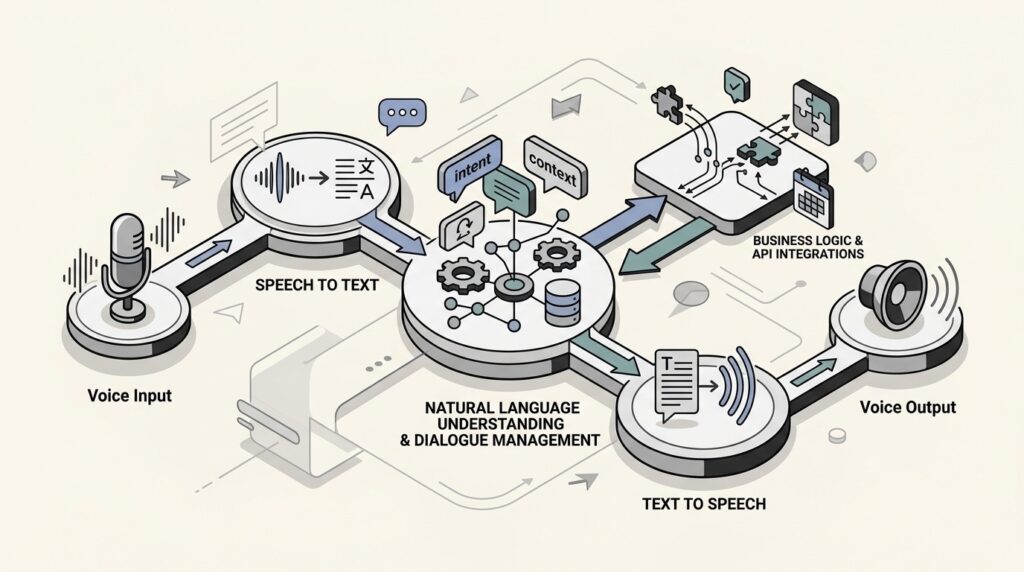

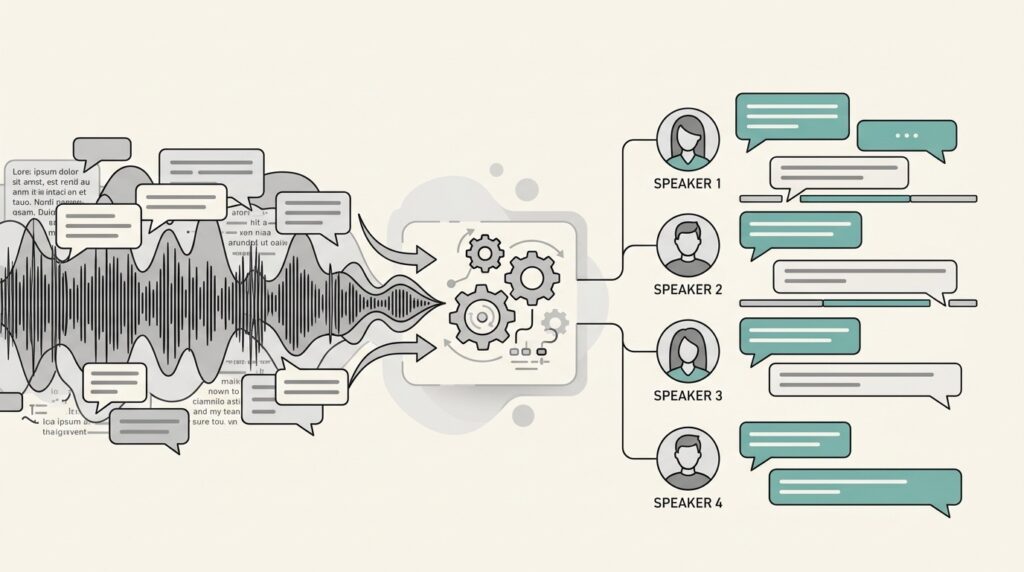

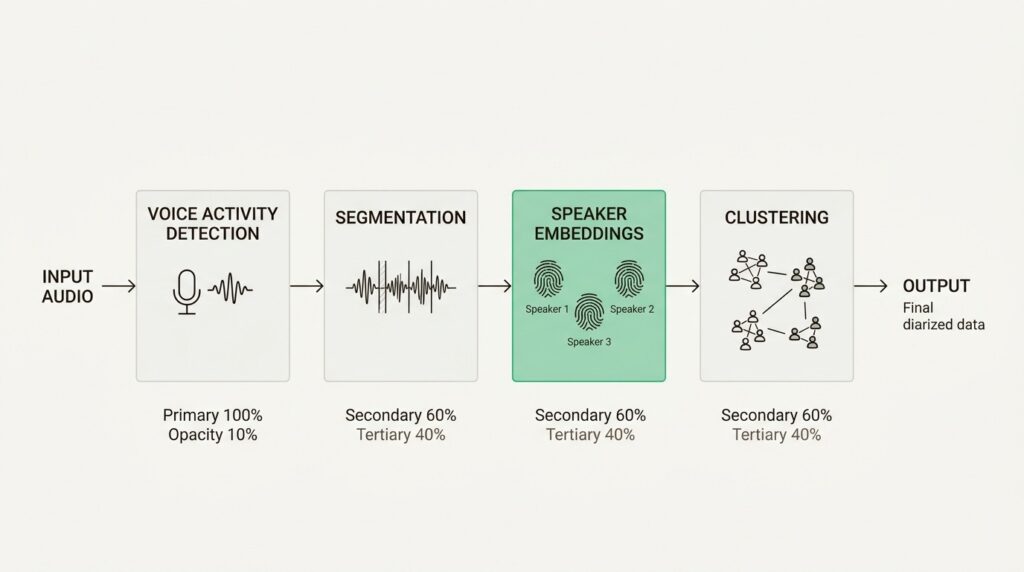

Sentiment analysis on voice data works across two channels simultaneously: the words being spoken and the acoustic properties of the audio itself.

The text channel looks at the transcript. Words like “frustrated,” “disappointed,” “confused,” “excellent,” and “finally resolved” carry obvious sentiment signals. But the more useful signals are subtler: hedging language (“I suppose that’s fine”), repeated requests for clarification (which can suggest confusion or distrust), and explicit refusals (“I already tried that”) that can indicate friction even when delivered calmly.

The acoustic channel looks at features of the audio signal that are independent of the words. Speech rate is one of the strongest signals. People tend to speak faster when agitated and slower when emotionally withdrawn or resigned. Pitch variation matters: highly varied pitch often accompanies frustration or emphasis, while flat pitch can indicate either calm or disengagement. Pause length, speaking volume, and the ratio of overlapping speech to listening time all contribute to the acoustic picture.
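As a rough illustration of the acoustic side, speech rate and pause length can be estimated from word-level timestamps, which many transcription systems emit. The sketch below assumes a hypothetical `Word` record with `start` and `end` times in seconds. The field names and sample timings are invented for illustration and are not taken from any particular API.

```python
from dataclasses import dataclass


@dataclass
class Word:
    text: str
    start: float  # seconds from the start of the audio
    end: float    # seconds from the start of the audio


def speech_rate_wpm(words):
    """Words per minute, measured from the first word's start to the last word's end."""
    if not words:
        return 0.0
    duration = words[-1].end - words[0].start
    if duration <= 0:
        return 0.0
    return len(words) / duration * 60.0


def mean_pause(words):
    """Average silent gap between consecutive words, in seconds."""
    gaps = [max(0.0, nxt.start - cur.end) for cur, nxt in zip(words, words[1:])]
    return sum(gaps) / len(gaps) if gaps else 0.0


# Invented timings for a slow, hesitant "I understand, thank you".
slow_reply = [
    Word("I", 0.0, 0.2),
    Word("understand", 0.9, 1.5),
    Word("thank", 2.5, 2.8),
    Word("you", 2.9, 3.0),
]

rate = speech_rate_wpm(slow_reply)
pause = mean_pause(slow_reply)
```

Numbers like these only become meaningful relative to a baseline, for example the same caller's rate earlier in the call.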

A well-designed sentiment system combines both channels. Text alone can miss tone. Audio alone can miss content. Together they give a picture that neither can provide independently.

Shunya Labs’ sentiment analysis feature works on this combined basis, producing sentiment labels and scores at the utterance level so you can track how a conversation moves over time rather than collapsing it into a single end-of-call score.
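One simple way to picture combining the two channels is a weighted average of a text score and an acoustic score, plus a flag for utterances where the two disagree. This is an assumption-laden sketch, not a description of how Shunya Labs or any other vendor fuses the channels. The function names, the 0.6 weight, and the 0.5 disagreement threshold are all hypothetical.

```python
def fuse_scores(text_score, acoustic_score, text_weight=0.6):
    """Weighted average of two sentiment scores, each assumed to lie in [-1, 1]."""
    if not 0.0 <= text_weight <= 1.0:
        raise ValueError("text_weight must be between 0 and 1")
    fused = text_weight * text_score + (1.0 - text_weight) * acoustic_score
    # Clamp in case an upstream score drifts slightly outside the expected range.
    return max(-1.0, min(1.0, fused))


def channels_disagree(text_score, acoustic_score, threshold=0.5):
    """True when the words and the audio point in noticeably different directions."""
    return abs(text_score - acoustic_score) >= threshold


# Polite words (positive text score) delivered in a flat, slow voice
# (negative acoustic score). Both values are invented for illustration.
fused = fuse_scores(text_score=0.6, acoustic_score=-0.4)
conflict = channels_disagree(text_score=0.6, acoustic_score=-0.4)
```

The disagreement flag is arguably the more useful output here. A caller with polite words and flat, slow delivery is exactly the case where the two channels pull apart, and a single fused number would hide that.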

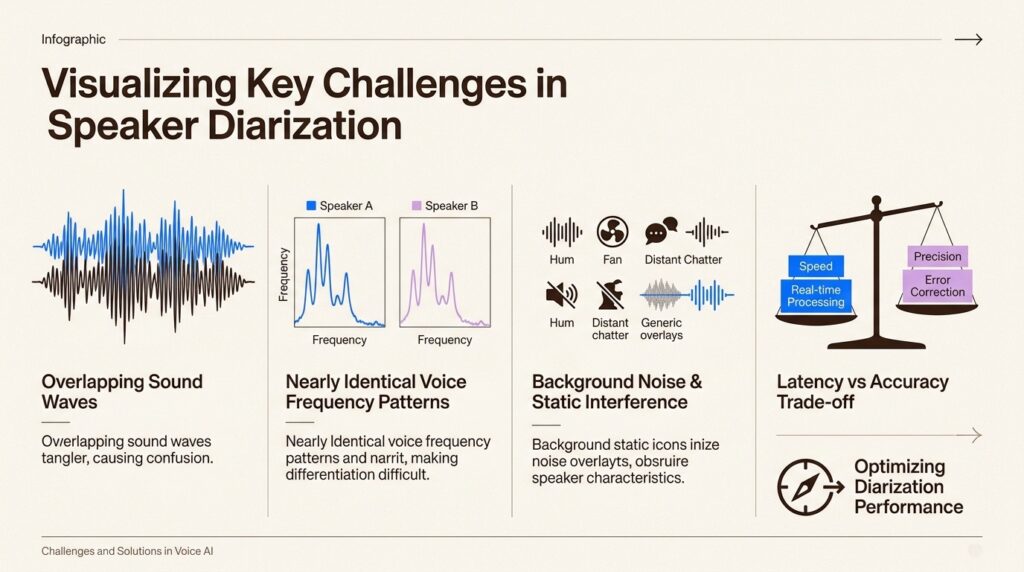

Why It Is Harder Than It Looks

Language is not a reliable carrier of feeling

Sarcasm is the obvious example. “Oh, that’s just great” means exactly the opposite of what the words say. Understatement is common in British English. Extreme politeness in many South and East Asian communication styles can mask serious dissatisfaction. Indirect complaint, where a speaker describes a problem without framing it as one, is how many people actually communicate frustration.

Sentiment models trained on direct, English-first datasets tend to underperform on communication styles that rely on indirection, politeness conventions, or cultural norms around emotional expression.

This matters especially in multilingual products. A model calibrated on English call data may read a deferential Hindi-speaking caller as satisfied when they are not. The courtesy is real. The satisfaction is not.

The same words carry different weight in different contexts

“I have been waiting for three weeks” carries a different sentiment depending on whether the speaker says it at the start of a call or after being told the issue is now resolved. Context within the conversation matters enormously, and many sentiment systems score utterances in isolation rather than as part of a conversational arc.

Similarly, professional callers such as insurance adjusters, B2B procurement teams, and people experienced with customer service escalations tend to use flatter, more controlled language regardless of how they actually feel. Sentiment scoring trained on general consumer calls will consistently underestimate negative sentiment in these interactions.

Short utterances produce unreliable scores

“Yes.” “Okay.” “Fine.” These words appear constantly in phone conversations. Each one is essentially unscoreable in isolation. Whether “fine” is dismissive, accepting, or genuinely content depends entirely on the surrounding conversation, the tone, and what just happened before it was said.

Sentiment systems that report a label for every utterance without a confidence qualifier produce a lot of noise on these short exchanges. The practical consequence is that aggregate sentiment scores for a call can shift significantly based on how many one-word responses it contained, not just on what the emotionally significant moments were.
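To make the noise problem concrete, the sketch below compares a plain average of utterance scores with an average that drops low-confidence utterances and weights the rest by confidence. The `score` and `confidence` fields, the 0.5 cut-off, and all values are hypothetical. Whether a given system exposes a confidence value at all is an assumption.

```python
def naive_mean(utterances):
    """Plain average: every utterance counts equally, including one-word replies."""
    return sum(u["score"] for u in utterances) / len(utterances)


def confidence_weighted_mean(utterances, min_confidence=0.5):
    """Average weighted by confidence, ignoring utterances below min_confidence."""
    kept = [u for u in utterances if u["confidence"] >= min_confidence]
    total_weight = sum(u["confidence"] for u in kept)
    if total_weight == 0:
        return None  # nothing reliable enough to score
    return sum(u["score"] * u["confidence"] for u in kept) / total_weight


# One emotionally significant utterance followed by three one-word replies.
call = [
    {"text": "I have been waiting for three weeks", "score": -0.8, "confidence": 0.9},
    {"text": "Okay", "score": 0.3, "confidence": 0.2},
    {"text": "Fine", "score": 0.4, "confidence": 0.2},
    {"text": "Yes", "score": 0.3, "confidence": 0.2},
]

plain = naive_mean(call)                   # pulled toward neutral by the short replies
weighted = confidence_weighted_mean(call)  # dominated by the one reliable utterance
```

With these made-up numbers the plain average lands slightly above zero, while the confidence-weighted version stays clearly negative.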

Where Sentiment Analysis Actually Delivers Value

Given those constraints, there are specific use cases where voice sentiment analysis earns its place in a product.

Escalation detection in real time

The most operationally valuable use of live sentiment analysis is identifying calls that are heading toward escalation before the customer asks for a supervisor. A caller whose sentiment has tracked from neutral to mildly negative to sharply negative over the first five minutes is a different situation from one who opened the call annoyed but has been steadily moving toward resolution.

Real-time sentiment scoring feeds agent assist panels with this trajectory information. The agent sees a signal that the conversation is deteriorating, and can adjust the approach or flag for supervisor involvement before the caller demands it. This has a direct impact on escalation rates and handle time.

Shunya Labs’ contact centre integration includes real-time speech intelligence for exactly this workflow: sentiment signals that surface during the call, not just in post-call analytics.
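A minimal sketch of trajectory-based escalation detection is shown below, assuming utterance-level scores in [-1, 1] arrive in order during the call. It fits a least-squares slope to the most recent scores and flags the call when the trend is downward and the latest score is already negative. The window size and both thresholds are hypothetical and would need tuning on real calls.

```python
def slope(values):
    """Least-squares slope of values against their index (0, 1, 2, ...)."""
    n = len(values)
    if n < 2:
        return 0.0
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    num = sum((i - mean_x) * (v - mean_y) for i, v in enumerate(values))
    den = sum((i - mean_x) ** 2 for i in range(n))
    return num / den


def is_deteriorating(scores, window=5, slope_threshold=-0.1, level_threshold=-0.2):
    """Flag a call whose recent sentiment is both falling and already negative."""
    recent = scores[-window:]
    if len(recent) < window:
        return False  # not enough evidence yet
    return slope(recent) <= slope_threshold and recent[-1] <= level_threshold


# Neutral drifting to sharply negative, versus an annoyed opening that recovers.
heading_for_escalation = [0.0, -0.1, -0.3, -0.5, -0.8]
moving_toward_resolution = [-0.7, -0.5, -0.4, -0.2, 0.0]

flag_a = is_deteriorating(heading_for_escalation)
flag_b = is_deteriorating(moving_toward_resolution)
```

Requiring both a negative slope and a negative current level is a design choice. It avoids flagging a call that has dipped from very positive to mildly positive.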

Post-call QA prioritisation

Call centres that record every call face a practical problem: no one has time to review all of them. Quality assurance teams typically sample a small percentage and manually evaluate them. Sentiment scoring applied to the full call archive lets you invert this. Instead of random sampling, you can surface the calls where sentiment dropped sharply, recovered unusually fast, or followed patterns associated with poor resolution outcomes.

This means QA time goes toward the calls that actually need attention. Agents get feedback on the interactions where coaching has the highest impact. And patterns that would be invisible in a random sample (a product issue that consistently produces frustrated callers, for instance, or a script segment that reliably generates negative sentiment spikes) become visible across the whole dataset.
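Replacing random sampling with a ranked review queue can be sketched as follows, assuming each archived call has a list of utterance-level scores. The ranking criterion used here, the largest fall from any earlier high point to a later score, is one hypothetical choice among many. The call IDs and scores are invented.

```python
def largest_drop(scores):
    """Largest fall from any earlier peak to a later score within one call."""
    peak = float("-inf")
    drop = 0.0
    for s in scores:
        peak = max(peak, s)
        drop = max(drop, peak - s)
    return drop


def prioritise(calls, top_n=2):
    """Return the IDs of the top_n calls with the sharpest sentiment drops."""
    ranked = sorted(calls.items(), key=lambda item: largest_drop(item[1]), reverse=True)
    return [call_id for call_id, _ in ranked[:top_n]]


archive = {
    "call-a": [0.1, 0.2, 0.1, 0.2],     # steady, mildly positive
    "call-b": [0.4, 0.1, -0.7, -0.5],   # sharp drop mid-call
    "call-c": [-0.2, -0.5, 0.0, 0.1],   # dip followed by recovery
}

review_queue = prioritise(archive)  # ["call-b", "call-c"]
```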

Customer satisfaction prediction before the survey

Post-call satisfaction surveys capture a small fraction of actual call outcomes. Most customers do not fill them in, and those who do skew toward strong responses in either direction. Sentiment scores from the call itself provide a proxy satisfaction signal for the full call population, not just the survey respondents.

This is not a replacement for surveys. It is a way to understand whether your survey data is representative, to identify calls where survey non-response may be hiding a quality problem, and to track satisfaction trends over time without depending on voluntary feedback.
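One way to check whether survey data is representative is to compare in-call sentiment for customers who returned a survey against those who did not. The sketch below assumes one summary sentiment value per call and a hypothetical `survey_returned` flag. The data is invented.

```python
from statistics import mean


def response_gap(calls):
    """Mean sentiment of survey respondents minus mean sentiment of non-respondents.

    A large positive gap hints that surveys over-represent happier calls.
    Returns None when either group is empty.
    """
    responded = [c["sentiment"] for c in calls if c["survey_returned"]]
    silent = [c["sentiment"] for c in calls if not c["survey_returned"]]
    if not responded or not silent:
        return None
    return mean(responded) - mean(silent)


calls = [
    {"sentiment": 0.6, "survey_returned": True},
    {"sentiment": -0.8, "survey_returned": True},
    {"sentiment": 0.5, "survey_returned": True},
    {"sentiment": -0.2, "survey_returned": False},
    {"sentiment": -0.3, "survey_returned": False},
    {"sentiment": -0.1, "survey_returned": False},
    {"sentiment": -0.4, "survey_returned": False},
]

gap = response_gap(calls)
```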

Agent coaching and performance tracking

Sentiment analysis across an agent’s calls over time tells a different story than any single call. An agent who consistently sees sentiment drop when explaining billing policies may need support on that specific topic. One whose calls show strong sentiment improvement in the second half of a conversation is handling recovery well and should probably be teaching that skill to others.

This kind of coaching signal is hard to get from call scoring rubrics, which measure what agents say rather than how customers respond to it. Sentiment scoring adds the customer-response dimension to agent performance data.
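The "recovery in the second half" signal can be approximated by comparing the mean sentiment of the second half of each call with the first half, then averaging per agent. This is a sketch under simple assumptions: the agent IDs and scores are invented, and splitting a call at its midpoint by utterance count is a hypothetical simplification.

```python
from collections import defaultdict
from statistics import mean


def recovery(scores):
    """Mean sentiment of the second half of a call minus the first half."""
    mid = len(scores) // 2
    if mid == 0:
        return 0.0  # too short to split
    return mean(scores[mid:]) - mean(scores[:mid])


def recovery_by_agent(calls):
    """Average recovery per agent from (agent_id, scores) pairs."""
    per_agent = defaultdict(list)
    for agent_id, scores in calls:
        per_agent[agent_id].append(recovery(scores))
    return {agent_id: mean(values) for agent_id, values in per_agent.items()}


calls = [
    ("agent-1", [-0.6, -0.4, 0.2, 0.4]),
    ("agent-1", [-0.2, 0.0, 0.2, 0.4]),
    ("agent-2", [0.2, 0.0, -0.2, -0.4]),
]

summary = recovery_by_agent(calls)
# agent-1 ends calls more positively than they began; agent-2 shows the reverse.
```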

Where It Can Struggle and What to Do About It

Do not use it as a standalone satisfaction metric

A sentiment score is not a CSAT score. Treating it as one will produce misleading results. Customers can have a frustrating interaction that ends with a resolution they are happy about. They can have a pleasant interaction that does not solve their problem. The correlation between in-call sentiment and post-call satisfaction exists, but it is not tight enough to substitute one for the other.

Use sentiment alongside outcome data (was the issue resolved, did the customer call back within 72 hours, did they cancel) to build a more complete picture.
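A small sketch of pairing sentiment with outcome data is shown below. The labels, the zero cut-off for "positive", and the parameter names are all hypothetical. The point is only that sentiment and outcome are treated as two separate axes.

```python
def classify(final_sentiment, resolved, called_back_within_72h=False, cancelled=False):
    """Cross in-call sentiment with what actually happened afterwards."""
    good_outcome = resolved and not called_back_within_72h and not cancelled
    positive = final_sentiment >= 0.0
    if positive and good_outcome:
        return "healthy"
    if positive:
        return "pleasant but unresolved"
    if good_outcome:
        return "resolved but frustrating"
    return "at risk"


label_a = classify(final_sentiment=0.4, resolved=False)
label_b = classify(final_sentiment=-0.5, resolved=True)
label_c = classify(final_sentiment=0.3, resolved=True, called_back_within_72h=True)
```

The second and third categories are the ones a sentiment score alone would misreport.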

Calibrate for your specific customer population

A sentiment model built on broad consumer call data needs calibration before it performs reliably on your particular customer base. B2B callers communicate differently from B2C callers. Healthcare patients communicate differently from retail customers. Multilingual callers using code-switched speech communicate differently from monolingual callers.

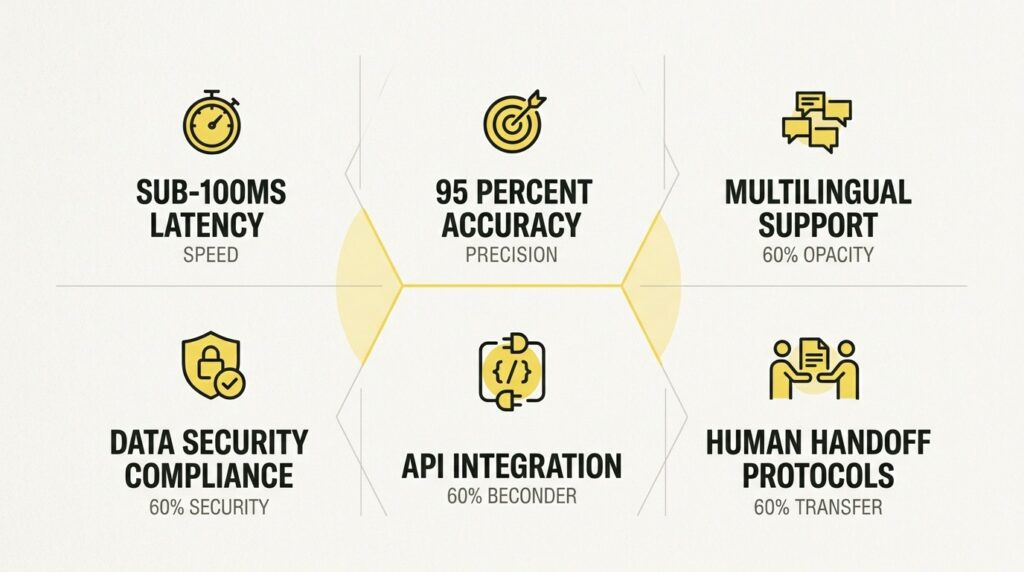

At Shunya Labs, the sentiment feature works on transcribed speech, which means it benefits directly from the accuracy of the underlying transcription. A model that transcribes mixed-language speech correctly produces better sentiment signals than one that mishears or drops words, because the text channel of the sentiment analysis depends on the words actually being right.

Track sentiment trajectory, not just endpoint

A call that starts at -0.8 sentiment and ends at +0.3 is a successful recovery. A call that starts at +0.2 and ends at -0.6 is a problem that developed during the interaction. A call that sits at 0.0 throughout might be efficient and neutral, or it might be a customer who gave up engaging.

The point is that the arc of the conversation matters more than any single number. Good sentiment tooling surfaces the trajectory, not just the score.
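The three example calls above can be expressed as a tiny post-call summary that labels the arc from the first score to the last. The 0.3 minimum change is a hypothetical threshold, and the intermediate scores are invented.

```python
def describe_trajectory(scores, min_change=0.3):
    """Label a call by the change between its first and last sentiment scores."""
    if not scores:
        raise ValueError("scores must not be empty")
    change = scores[-1] - scores[0]
    if change >= min_change:
        return "recovery"
    if change <= -min_change:
        return "deterioration"
    return "flat"


recovered = describe_trajectory([-0.8, -0.4, 0.3])
worsened = describe_trajectory([0.2, -0.1, -0.6])
unchanged = describe_trajectory([0.0, 0.0, 0.0])
```

A "flat" label still cannot distinguish an efficient, neutral call from a customer who gave up engaging, so it works better as a prompt for review than as a verdict.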

A Realistic Expectation

Voice sentiment analysis is genuinely useful. It surfaces patterns that would otherwise require listening to every call, which no team can do at scale. It provides early warning signals for conversations going wrong. It makes QA more efficient and coaching more targeted.

What it cannot do is replace human judgment on individual calls, accurately interpret every cultural communication style, or produce meaningful scores on very short utterances without additional context.

The teams that get the most from it treat it as one input into a broader picture: sentiment alongside intent, alongside resolution outcome, alongside silence rate and call duration. No single signal tells you how a conversation went. But several signals together tell you a great deal.

Shunya Labs’ speech intelligence suite combines sentiment analysis with intent detection, emotion diarization, speaker diarization, and summarisation, precisely because useful call intelligence comes from combining signals, not from any one feature alone. If you want to see how sentiment analysis performs on your own call audio, you can test it directly in the playground or explore the full documentation at docs.shunyalabs.ai.