Picture this: Your contact center handles calls in Hindi, Tamil, and English—sometimes all three in the same conversation. Your current speech-to-text system transcribes the English perfectly, mangles the Hindi, and completely gives up when customers code-switch mid-sentence. Sound familiar?

You’re not alone. Most multilingual ASR (Automatic Speech Recognition) systems face a tradeoff: cover more languages and watch accuracy collapse, or stay accurate in a handful of languages and leave most of your users behind.

At Shunya Labs, we built Zero STT to break that tradeoff—delivering production-grade accuracy across 200+ languages without the lag, cost, or complexity that usually comes with multilingual voice AI. Here’s how we did it, and why it matters for teams shipping voice features in contact centers, media, healthcare, and beyond.

The Problem: Why Most Multilingual ASR Systems Struggle

Traditional multilingual speech recognition systems force you to choose your pain:

Option A: Broad coverage, poor accuracy. Systems that claim to support 100+ languages often deliver mediocre results across all of them—especially on the “long-tail” languages that matter most to your users.

Option B: High accuracy, narrow coverage. Language-specific models work great for English or Mandarin, but leave you scrambling to patch together solutions for regional languages, accents, and code-mixing.

Option C: Good accuracy and coverage, but painfully slow. Some systems achieve both breadth and precision by using massive models that take seconds to transcribe short utterances—useless for real-time applications like live captioning or voice assistants.

The core issue? Most multilingual models are trained on massive, undifferentiated datasets where Hindi street noise gets the same weight as studio-quality English recordings. The model learns everything equally—which means it masters nothing that matters.

Understanding the Tradeoffs: What You’re Actually Measuring

Before we explain how Zero STT solves this, let’s break down the two fundamental tensions in multilingual ASR—and the metrics that reveal them.

Tension #1: Accuracy ↔ Versatility

The problem: When you ask a fixed-size model to cover many languages, its “parameter budget” per language shrinks. This phenomenon—called the “curse of multilinguality”—means that per-language accuracy often drops as coverage increases.

Think of it like hiring one person to speak 50 languages versus hiring 50 native speakers. The generalist will miss nuances.

Concrete example: OpenAI’s Whisper offers both English-only and multilingual checkpoints. The English-only version consistently outperforms the multilingual version on English audio, while the multilingual version wins on breadth. That’s the tradeoff in action.

How accuracy is measured:

- Word Error Rate (WER): The industry-standard metric. It counts substitutions, deletions, and insertions against the reference transcript. A WER of 5% means the system gets 95 out of 100 words correct. Lower is better.

- Character Error Rate (CER): Useful for languages where “word” boundaries are fuzzy (like many Asian scripts). It measures edit distance at the character level. Also lower is better.

What to watch for: Don’t just look at headline WER numbers. Ask about performance on your specific languages, accents, and domains. A model with 3% WER on clean English might hit 20% WER on accented Hindi or code-mixed Hinglish.

Tension #2: Versatility ↔ Latency

The problem: Streaming ASR (the kind that transcribes speech as you speak) must emit words quickly with limited look-ahead. Less future context keeps latency low but hurts accuracy. More look-ahead improves accuracy but adds delay—making the system feel sluggish.

For multilingual systems, this tension intensifies. Juggling multiple scripts and phonetic patterns often requires either larger context windows (raising latency) or careful architectural tricks to keep latency steady without losing accuracy.

How latency is measured:

- Real-Time Factor (RTF): Processing time divided by audio duration. RTF < 1 means faster than real-time (good). RTF = 1 is exactly real-time. RTF > 1 means the system can’t keep up.

- Time to First Token (TTFT): The delay from when someone starts speaking to when the first word appears. This drives perceived “snappiness”—crucial for conversational AI.

- Endpoint latency: The delay from when someone stops speaking to when the final transcript appears. Usually reported as P50/P90/P95 percentiles.

What to watch for: Vendors love to report best-case RTF on high-end GPUs. Ask about P95 latency on your target hardware (often commodity CPUs) and real-world network conditions. Small differences here destroy user experience.

Our Solution: Training on High Entropy Indic Data

Here’s where Zero STT diverges from conventional multilingual ASR.

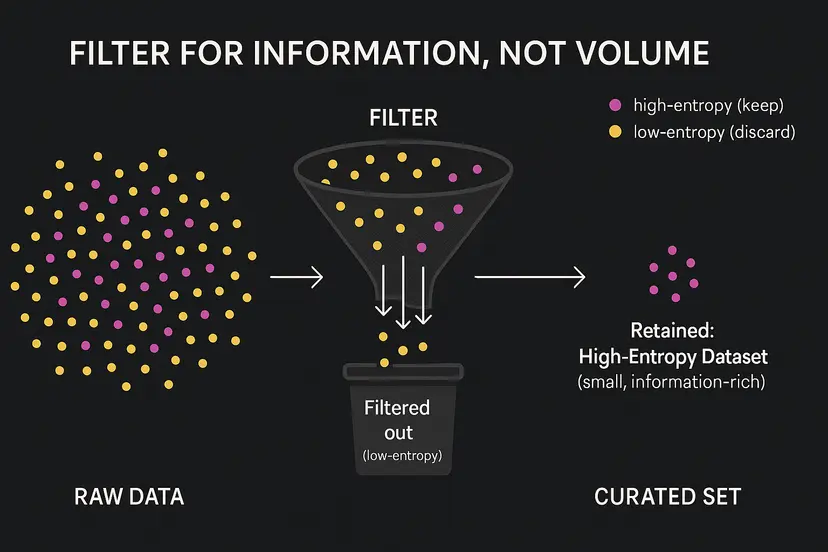

Instead of training on every available hour of audio, we curate our training data based on information density—what we call “high-entropy” samples. Each audio clip gets scored on four dimensions:

- Acoustic entropy: Is the audio noisy, reverberant, or captured on low-quality devices? These “hard” conditions force the model to generalize better.

- Phonetic entropy: Does it contain rare sounds or unusual sound combinations? This helps with accents and dialectal variation.

- Linguistic entropy: Does it use uncommon vocabulary, syntax, or jargon? This improves performance on domain-specific language (medical terms, legal jargon, brand names).

- Contextual entropy: Does the audio-text pair contain strong predictive signals—like code-mixing (Hinglish, Tanglish) or proper nouns?

We keep high-surprise samples and remove redundant samples using a threshold that increases exponentially across training rounds. Think of it as teaching a student with increasingly challenging problems, not endless repetition of easy ones.

Why this works in practice

Hard audio becomes easy in production. By training on noisy and device-diverse clips, the model doesn’t need extra look-ahead to stay accurate in real-world conditions. The result is streaming-grade latency without giving up accuracy.

High linguistic entropy means fewer breakdowns on real speech. Indic languages are inherently higher entropy—rich morphology and agreement, multiple grammatical genders, and flexible word order (often SOV with variations). Training on this structural diversity exposes the model to many “difficult” cases (surprises), so it learns more efficiently, stays lighter, and performs better under uncertainty.

Compute efficiency with state-of-the-art accuracy. Our entropy-guided pruning focuses training on information-dense hours instead of brute-force scale, reaching 3.10% WER on our universal model. For full results, see our benchmarks.

Real-time serving at scale. The models are engineered for streaming-grade latency and faster-than-real-time throughput on standard GPU tiers, so you can ship responsive captions and agents without exotic hardware.

Breadth that holds up. Where many stacks look great on one or two head languages and then slip, our multilingual models stay reliable across diverse languages—including Indic—because the training data preserves the right diversity, not just more of the same.

What This Means for You

For Contact Centers

- Handle code-mixed conversations (English ↔ Hindi, Tamil ↔ English)

- Transcribe noisy call-center audio accurately without expensive noise-cancellation preprocessing

- Run on-premises for compliance without sacrificing speed or accuracy

For Media & News

- Live-caption multilingual broadcasts with sub-second latency

- Transcribe field recordings with background noise and cross-talk

- Support regional languages without maintaining separate pipelines

For Healthcare

- Accurately capture medical terminology across languages

- Run offline for patient privacy (HIPAA/GDPR compliance)

- Transcribe doctor-patient conversations with code-mixing and accents

For Developers

- Deploy on commodity CPUs—no GPU vendor lock-in

- Privacy-first architecture: on-prem, offline, or cloud

Getting Started with Zero STT

One question we get often: “What is code-mixing, and why should I care?” Code-mixing is when speakers alternate between languages mid-conversation—like “Today ka meeting postpone ho gaya hai” (mixing English and Hindi). It’s extremely common in multilingual regions, from Mumbai call centers to Singapore offices, but it breaks most ASR systems. They’re trained on clean, monolingual speech and simply don’t know what to do when someone switches languages mid-sentence.

Zero STT handles code-mixing natively because our high-entropy training specifically includes these mixed-language scenarios. We don’t treat them as edge cases—they’re the norm for millions of users.

How does this compare to the big cloud providers? While services like Google Cloud Speech-to-Text and AWS Transcribe offer broad language coverage, they’re cloud-only and can struggle with code-mixing and long-tail languages. Zero STT matches or exceeds their accuracy on Indic languages while giving you the flexibility of on-prem deployment, offline operation for data privacy (GDPR, HIPAA compliant), and lower latency on commodity hardware—no expensive GPU infrastructure required.

Ready to see it in action?

Test Zero STT in your browser right now. Switch between languages, upload your own audio clips (noisy call recordings, accented speech, code-mixed conversations), and see how the model performs under real conditions. Launch Demo for Zero STT →

Browse our full list of 200+ supported languages, integration guides, and API reference in our documentation. View Zero STT Documentation →

The Bottom Line

Multilingual ASR doesn’t have to mean choosing between accuracy, speed, and coverage. By training on high-entropy data—especially the messy, real-world audio that reflects actual user conditions—Zero STT delivers all three.

Whether you’re building voice features for a contact center in Mumbai, a newsroom in Jakarta, or a telemedicine platform in Manila, you need ASR that works on the audio your users actually produce: noisy, accented, code-mixed, and real.

That’s what we built.

Evaluating Zero STT for your organization? Reach out to us and talk to an expert for your use case. Book a meeting →

Leave a Reply

You must be logged in to post a comment.